Ian Salmon is a regulation expert who currently sits on the FIX Community MiFID clock sync panel and works with network performance solution provider Accedian. In the past, Ian has headed up global marketing for ITRS and been Head of the MiFID programme and Global Product Marketing Director for Fidessa.

The narrative surrounding MiFID II so far hasn’t exactly been a positive one, and few in the financial services industry have cast ESMA as the hero of the story. While the industry accepts the need for changes to certain practices, MiFID II represents the most far-reaching change ever to hit the industry in one fell swoop. Change of that magnitude is scary; it’s also potentially expensive and difficult. So some trepidation is understandable. However, is it possible we’re telling the wrong story? Could it be that, if approached holistically, some of the changes mandated by this impending regulation could actually result, through ‘unintended consequences’, in positives for the business?

Ian Salmon

In the case of time-stamping, MiFID II could actually empower the business to access and analyse incredibly valuable and powerful key metrics not previously available - in near real time - giving the recipient business owners far greater visibility of the performance/utilisation of their greatest assets. In fact, maybe the move to MiFID II could be less a white elephant and more a white knight.

The challenge of timestamping

The MiFID II technical standards lay out the requirements for timestamping in Article 50 on clock synchronisation. However, the clocks being synchronised span many different arms of the business, so the requirement also touches the trade and transaction reporting requirements in Articles 6, 7, 10, 11 and 26, and the record-keeping requirements under Articles 17 and 25. The crux of the matter is that, in order to ensure good trading hygiene and to produce and store a granular, timestamped history of a trade, ESMA requires the entire trading technology estate to be synchronised to Coordinated Universal Time (UTC) with less than 100 µs (microseconds) of divergence.
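To make the divergence requirement concrete, here is a minimal Python sketch of the kind of check a firm might run across its estate. It assumes each host can report its own clock reading alongside a trusted UTC reference reading taken at the same instant; the `ClockSample` type, the host names and the function names are hypothetical, and the 100 µs limit is simply the figure quoted above.

```python
from dataclasses import dataclass

# 100 microseconds expressed in nanoseconds (the tolerance quoted in the text).
MAX_DIVERGENCE_NS = 100_000


@dataclass(frozen=True)
class ClockSample:
    host: str          # hypothetical host name
    local_ns: int      # host clock reading, nanoseconds since the Unix epoch
    reference_ns: int  # UTC reference reading at the same instant, nanoseconds


def divergence_ns(sample: ClockSample) -> int:
    """Absolute difference between the host clock and the UTC reference."""
    return abs(sample.local_ns - sample.reference_ns)


def out_of_tolerance(samples, limit_ns=MAX_DIVERGENCE_NS):
    """Return the hosts whose divergence is not strictly below the limit."""
    return [s.host for s in samples if divergence_ns(s) >= limit_ns]


samples = [
    ClockSample("gateway-1", 1_000_000_000_000, 1_000_000_040_000),  # 40 us off
    ClockSample("oms-1", 1_000_000_250_000, 1_000_000_000_000),      # 250 us off
]
failing_hosts = out_of_tolerance(samples)
```

In this illustration only `oms-1` would be flagged. Obtaining a trustworthy reference reading is the hard part in practice and is outside the scope of the sketch.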

If you are unlucky enough to fall under ESMA’s definition of High Frequency Trading (HFT) (which it seems most brokers of any scale will be), then you will need to timestamp ALL investment decisions as audit points in the lifecycle of your execution flow to 1 µs accuracy. Not only that, you will also need to be able to reconstruct the entire transaction lifecycle historically, on demand, in order to demonstrate appropriateness of trading. It’s an intimidating challenge and one likely to throw up a number of ‘unintended consequences’.

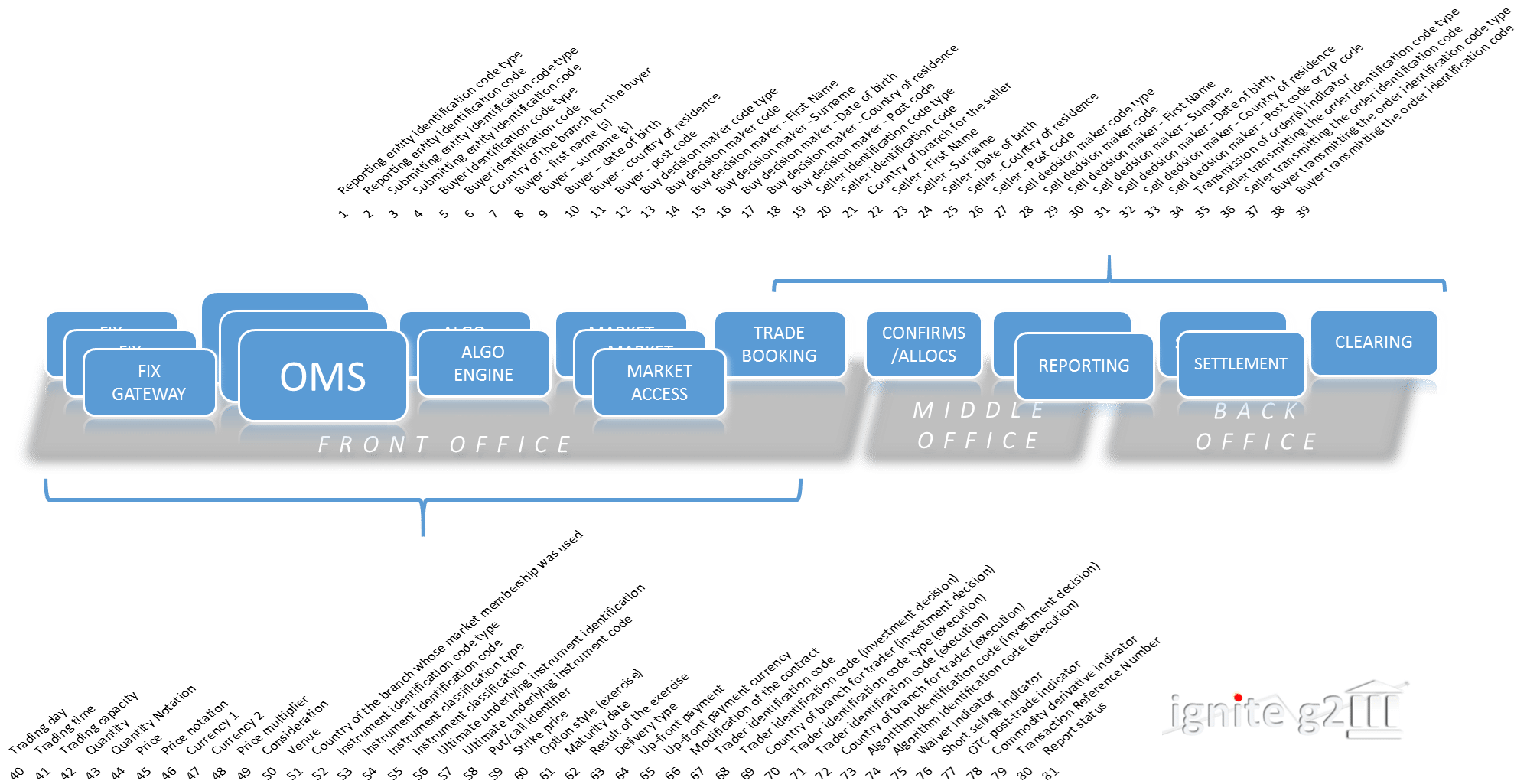

The rudimentary diagram above shows a typical low/no-touch equities flow, giving an idea of just how many events need to be recorded. MiFID II mandates 60+ fields, and requires that firms store that data for upwards of five years post-execution. Equities are one thing, but the same applies across asset classes, to both on- and off-exchange trading, globally, for any FCA-regulated entity.

The solution?

Let’s assume for a moment that the industry meets the challenge of creating a single, industry-wide time source (UTC) to synchronise to (the latest draft says that GPS is acceptable, so it’s not so far-fetched an assumption). That still leaves us with the problem of timestamping, collecting, consolidating and piecing back together potentially millions of execution flows across thousands of instruments for hundreds of clients, with little or no impact on the underlying business. All for no purpose other than to be compliant and to be able to demonstrate timeliness, completeness and accuracy of trading.
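As a rough illustration of what 'piecing back together' means once the events have been collected, the Python sketch below groups timestamped events by order and sorts each group by its UTC timestamp. The `Event` fields, stage names and order identifiers are hypothetical, and the sketch assumes every capture point is already synchronised to the same UTC source; without that, sorting by timestamp would not give a reliable sequence.

```python
from collections import defaultdict
from typing import NamedTuple


class Event(NamedTuple):
    order_id: str  # hypothetical identifier linking events in one flow
    stage: str     # e.g. "received", "routed", "executed" (illustrative names)
    utc_ns: int    # capture timestamp, nanoseconds since the Unix epoch


def reconstruct_lifecycles(events):
    """Group events by order and sort each group into time order."""
    flows = defaultdict(list)
    for ev in events:
        flows[ev.order_id].append(ev)
    return {oid: sorted(evs, key=lambda e: e.utc_ns) for oid, evs in flows.items()}


# Events arrive out of order and interleaved, as they would from many collectors.
captured = [
    Event("ORD-2", "executed", 5_000),
    Event("ORD-1", "routed", 2_000),
    Event("ORD-1", "received", 1_000),
    Event("ORD-2", "received", 1_500),
    Event("ORD-1", "executed", 3_000),
]
lifecycles = reconstruct_lifecycles(captured)
ord1_stages = [ev.stage for ev in lifecycles["ORD-1"]]
```

The logic is trivial at this scale. The difficulty described above lies in doing it for millions of flows from disparate systems whose clocks and identifiers do not naturally agree.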

The first solution that comes to mind is to delve headfirst into the myriad technology platforms and underlying application stacks that make up a modern trading organisation, and try to fine-tune them, at the application level, in order to get all these applications in sync. At first sight, it’s a tempting solution, because it allows each part of the business to deal with its own part of the flow on a piecemeal basis, breaking the overall task down into seemingly more manageable chunks.

However, this would be a mistake. Not only would this be an incredibly difficult technical feat, it would also be hugely expensive to address these issues at the application level. Given the disparate nature of the underlying technologies, attempting to then glue those individual efforts together into a single audit record and with the level of accuracy mandated in MiFID II is unlikely to be successful. Post-deadline, the consequences of failure could be far-reaching. Fortunately, there is a viable alternative.

Network level timestamping

Instead, the financial services industry should do what it’s done successfully many times in the past, which is to look over the fence at adjacent industries and to consider the technologies and solutions that they have developed in order to solve similar business challenges.

In this case, finance can take its lead from the telco world, which has faced similar challenges and met them with specialised instrumentation at the network level, eliminating the need to tinker intrusively with each and every application stack. This involves deploying small, exceptionally cost-effective FPGA devices pervasively at the network level. These collect precisely targeted data from deep within the packets of specified data flows and then forward it losslessly for analysis, with zero impact on the underlying network architecture. This technology already exists and has been operating successfully behind the scenes in the North American telco industry, where over 80% of all mobile phone calls touch it. It’s fast, accurate technology and cheaper than application-layer solutions.

The recent news that GPS will be accepted as the method for connecting to the planned central industry-wide time source also points to a solution proven in the telco world, where GPS connectivity has been standard for many years.

By deploying a network-layer solution, companies can build an accurate, synchronised bird’s-eye view of the data, enabling microsecond timestamping and deep packet analysis as part of everyday business operations at an affordable price, and at minimal risk to the business.

In its ‘Partnership Fund for New York’ study, Accenture recently quoted an increase in FinTech spend of some $5bn (from $3bn to $8bn) between 2013 and 2018, a large part of it driving ‘RegTech’ initiatives. ‘Regulations,’ they say, ‘have created a complex compliance burden for financial services companies, which can be solved in large part by new technologies’. What if the market were able to take at least some of that $5bn and use it in a way that not only meets regulatory objectives but also allows regulated entities to find new and innovative ways of differentiating themselves and their services?

And those pesky ‘unintended consequences’?

But few stories are so simple - there’s always a twist in the plot. With regulations, those twists tend to be unintended consequences, and the same goes for the systems and solutions firms put in place to comply with them. What those consequences are depends largely on how a business tackles the problem.

If it plumped for the application-layer solution, the unintended consequences might be lag or downtime caused by excess strain on the system, on top of the huge expense and difficulty.

However, if a business went about preparing for MiFID II timestamping requirements by installing non-disruptive instrumentation at the network layer, then the unintended consequences might be quite different. That business (and its clients) would then have complete transparency about what, where, how and for whom it is executing its flow. That data can also demonstrate that a business is meeting or exceeding its Service Level Agreements and deliver powerful performance metrics – which are an increasingly important part of the sales and reporting process.
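One way to picture the Service Level Agreement point is the sketch below, which derives per-order latency from two network capture points and reports the share of orders that fall within an agreed threshold. It is an illustration only: the capture points (ingress and egress), the order identifiers, the 500 µs threshold and the function names are all assumptions made for the example.

```python
def latencies_us(ingress_ns, egress_ns):
    """Per-order latency in microseconds for orders seen at both capture points.

    Both arguments map a hypothetical order id to a UTC timestamp in nanoseconds.
    """
    return {
        oid: (egress_ns[oid] - ingress_ns[oid]) / 1_000
        for oid in ingress_ns
        if oid in egress_ns
    }


def sla_compliance(latencies, threshold_us):
    """Fraction of measured orders at or below the threshold (0.0 if none measured)."""
    if not latencies:
        return 0.0
    within = sum(1 for value in latencies.values() if value <= threshold_us)
    return within / len(latencies)


ingress = {"ORD-1": 0, "ORD-2": 1_000, "ORD-3": 5_000}
egress = {"ORD-1": 250_000, "ORD-2": 801_000}  # ORD-3 has not left the estate yet

measured = latencies_us(ingress, egress)
compliance = sla_compliance(measured, threshold_us=500)
```

Because the timestamps are taken on the wire rather than inside the applications, a figure like this can be produced without touching the trading systems themselves.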

Implemented across any single Investment Bank or Broker’s (IBB) execution flows, cross-asset and globally, the possible uses of that instrumented data are almost endless. They could include key trading metrics, such as total consideration traded and fill rates per day, instrument or market for a given client. Further, those metrics could be ranked relative to that client’s performance over the previous hours, days, weeks and months, on a per-instrument, per-market or per-account basis. This not only gives the IBB, and potentially its client (if delivered back to them through a white-labelled portal), complete transparency around what they have traded and how, but also gives the broker empirical evidence of the nature and direction of that client’s utilisation. Are they executing more or less flow, smaller or larger clip sizes, more or fewer instruments, wider or narrower market coverage?
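The two metrics named above can be sketched in a few lines of Python. The example assumes that the captured data has already been reduced to one record per order, carrying the ordered quantity, the filled quantity and an average fill price; the `OrderRecord` type, the client and instrument names, and the dates are hypothetical. Consideration is taken as filled quantity multiplied by price, and fill rate as filled quantity divided by ordered quantity.

```python
from collections import defaultdict
from typing import NamedTuple


class OrderRecord(NamedTuple):
    client: str
    instrument: str
    trade_date: str   # ISO date string, e.g. "2017-01-03"
    ordered_qty: int
    filled_qty: int
    price: float      # average fill price (floats are for illustration only)


def client_metrics(records):
    """Total consideration and fill rate per (client, instrument, day)."""
    totals = defaultdict(lambda: {"consideration": 0.0, "ordered": 0, "filled": 0})
    for r in records:
        bucket = totals[(r.client, r.instrument, r.trade_date)]
        bucket["consideration"] += r.filled_qty * r.price
        bucket["ordered"] += r.ordered_qty
        bucket["filled"] += r.filled_qty
    return {
        key: {
            "consideration": b["consideration"],
            "fill_rate": b["filled"] / b["ordered"] if b["ordered"] else 0.0,
        }
        for key, b in totals.items()
    }


records = [
    OrderRecord("ACME", "VOD.L", "2017-01-03", 100, 100, 10.5),
    OrderRecord("ACME", "VOD.L", "2017-01-03", 100, 50, 20.0),
    OrderRecord("ACME", "BARC.L", "2017-01-03", 200, 0, 0.0),
]
metrics = client_metrics(records)
```

Changing the grouping key to market or account would give the other cuts mentioned above, and comparing the same key across dates would give the trend in a client's utilisation.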

It’s widely acknowledged that information is power but knowledge is king. Given the management information it needs to understand its clients’ activities, the business can act wisely and with precision to build persistent and progressive strategic relationships.

All this means that, when MiFID II does eventually roll around, the business will have little else to do other than what it does every day, and the system that allows it to do so can deliver powerful business benefits in the meantime. If the financial services industry looks at timestamping with fresh eyes and borrows from the telco world, it might just find that MiFID II timestamping is less a white elephant than a white knight.