By David Murray, Chief Business Development Officer, Corvil.


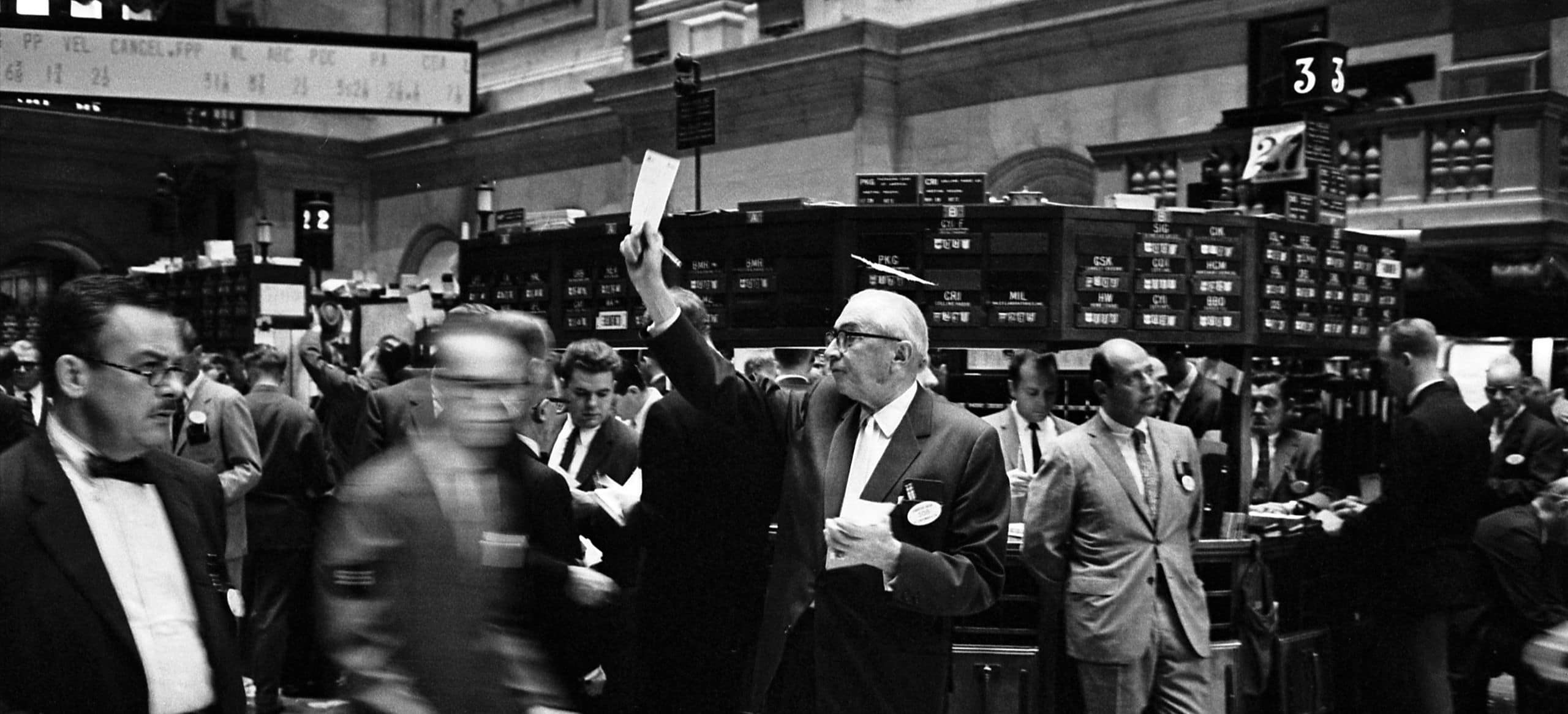

Traditional traders, the famous and infamous Wolves of Wall Street, run the financial markets no more. For some time now, the market has been controlled by the “quants”: mathematicians, statisticians, even theoretical physicists and data scientists. The quants are the ones who design, deploy, and truly understand the algorithms that now account for more than 70% of equities trading every day.

The algorithms decide what to buy and sell as well as where to buy and sell it. At their core, algorithms are complicated sets of rules that can ingest huge volumes of data and rapidly decide on, and even execute, the optimal course of action. These rule sets are implemented as software programs that work through all of this in fractions of a second, far beyond the limits of human performance.

Once an algorithm is created, it is difficult (but not impossible) for an external actor to change it. Still, like all software, algorithms and algorithmic businesses are vulnerable to attack. Someone with the right access, whether legitimately obtained or not, could in theory sabotage an algorithm from the inside. Far more likely, however, are the scenarios we have already seen in financial markets: inadvertent gaps in coding logic, unanticipated conditions, or simple human error, any of which can have global consequences.

Further, even without changing the software itself, someone with an understanding of how a particular algorithm works could manipulate it by introducing certain inputs. The algorithm will act on these inputs as expected, but could end up doing some very dangerous things simply by following its own 'rules'.

This is the point at which the machines' advantages become dangers. Such is the reach of machines and markets today, and it is why we must understand what is happening even when it happens a million times faster than humans can operate.

The speed and complexity of this machine-based system have added billions of dollars to the world’s economy while saving millions of labor-hours in efficiency. But they have introduced a blind spot that digital business has still not been able to completely illuminate.

This unintended consequence of such speed and intricacy has led to a loss of transparency into, and understanding of, data flows, customer experience, and the interworkings of the underlying technology. Needless to say, it is very dangerous when companies operate with minimal insight into their digital, automated, or, especially, algorithmic businesses. The danger is greater still when machines can render a business insolvent in a matter of minutes. No traditional trader, and indeed no human being, can keep track of everything that is happening with any meaningful degree of understanding.

Companies have attempted (or been required by regulation) to deploy a 'circuit breaker' or kill switch: 'overseer' software that has the power to pull the plug when it sees anomalous conditions.
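To make the idea concrete, here is a minimal Python sketch of such an overseer. Everything in it is a hypothetical illustration: the `KillSwitch` and `Order` names, the two checks (an order-rate limit over a sliding time window and a cap on net position), and the limits used. Real kill switches, and the regulatory requirements behind them, are considerably more involved.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Order:
    """Hypothetical, minimal order record (real order messages carry far more)."""
    timestamp: float  # seconds since some reference point
    side: str         # "buy" or "sell"
    quantity: int


class KillSwitch:
    """Hypothetical overseer that halts one algorithm on anomalous conditions.

    The two checks below are illustrative assumptions, not any regulator's
    or vendor's rules: an order-rate limit over a sliding time window, and
    a cap on the absolute net position.
    """

    def __init__(self, max_orders: int, window_s: float, max_position: int):
        self.max_orders = max_orders
        self.window_s = window_s
        self.max_position = max_position
        self.recent = deque()  # timestamps of orders inside the sliding window
        self.position = 0      # net quantity of accepted orders
        self.tripped = False
        self.reason = ""

    def on_order(self, order: Order) -> bool:
        """Return True if the order may proceed, False if it is blocked."""
        if self.tripped:
            return False  # all-or-nothing: nothing passes once tripped
        # Evict timestamps that have aged out of the sliding window.
        while self.recent and order.timestamp - self.recent[0] >= self.window_s:
            self.recent.popleft()
        self.recent.append(order.timestamp)
        signed = order.quantity if order.side == "buy" else -order.quantity
        new_position = self.position + signed
        if len(self.recent) > self.max_orders:
            self._trip("order rate limit exceeded")
        elif abs(new_position) > self.max_position:
            self._trip("position limit exceeded")
        else:
            self.position = new_position  # only accepted orders change the position
        return not self.tripped

    def _trip(self, reason: str) -> None:
        self.tripped = True
        self.reason = reason


switch = KillSwitch(max_orders=3, window_s=1.0, max_position=500)
orders = [Order(0.0, "buy", 100), Order(0.1, "buy", 100),
          Order(0.2, "sell", 50), Order(0.3, "buy", 10)]
results = [switch.on_order(o) for o in orders]
print(results, switch.reason)  # [True, True, True, False] order rate limit exceeded
```

The fourth order lands in the same one-second window as the first three, so the switch trips and from then on blocks everything, including perfectly ordinary orders. That blunt, all-or-nothing behavior is exactly what makes such a safety net disruptive.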

In theory, this is a good and necessary safety net. But in an increasingly interconnected global reality, shutting down any one part can disrupt many other processes, with unintended and unforeseen consequences as certain sources of liquidity are temporarily stalled.

A simple analogy is a car that shuts off completely whenever something anomalous occurs: the passenger is protected, but the resulting traffic backup could be substantial, and the shutdown would seem an overreaction if the problem were, say, a malfunctioning radio. And if someone found a way to trigger that shutdown through something as minor as the air conditioning in one car model, they could turn I-95 into a parking lot.

For example, if a hacktivist organization wished to target today’s financial markets, it would not be out of the question for it to masquerade as a legitimate trading organization and flood the market, across multiple venues, with bogus orders. This would likely trip multiple circuit breakers and shut the markets down for some period.

Because participants' algorithms are set to buy or sell at specific thresholds, an attacker need only trip one trigger to start a chain reaction, an avalanche that builds before anyone has a chance to react. Even if the circuit-breaker overseers stopped patient zero, the damage from such a large-scale disruption to the markets would still be grave.
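The chain reaction can be illustrated with a deliberately crude toy model. Every element here is a simplifying assumption rather than a description of real market microstructure: each hypothetical participant sells once when the price falls to its trigger level, each forced sale pushes the price down by a fixed amount, and prices are integer cents to avoid floating-point noise.

```python
def simulate_cascade(start_price, shock, thresholds, impact_per_sale):
    """Toy model of threshold-triggered selling (all parameters hypothetical).

    Prices are integer cents. Each participant sells once when the price
    falls to or below its threshold, and every forced sale pushes the price
    down by impact_per_sale. Returns (final_price, thresholds_triggered).
    """
    price = start_price - shock  # the initial push, e.g. from bogus orders
    triggered = []
    # Highest thresholds are reached first as the price falls.
    for threshold in sorted(thresholds, reverse=True):
        if price > threshold:
            break  # price never reached this trigger, so no lower one fires either
        triggered.append(threshold)
        price -= impact_per_sale  # this participant's sale deepens the fall
    return price, triggered


thresholds = [9_950, 9_900, 9_850, 9_800, 9_750]  # $99.50 down to $97.50
print(simulate_cascade(10_000, 40, thresholds, 60))  # (9960, [])
print(simulate_cascade(10_000, 60, thresholds, 60))  # (9640, [9950, 9900, 9850, 9800, 9750])
```

A 40-cent push trips nothing. A 60-cent push trips the first trigger, and each forced sale then trips the next one, taking the price down $3.60 in total: a small nudge becomes an avalanche.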

Although market surveillance and analytics exist to detect and identify abusive activity, detection often comes long after the original incident, so these tools often function more as a deterrent than as a preventive measure. Even the original 2010 flash crash took months of unraveling and unwinding before regulators were satisfied they understood its causes.

The only way to govern the proper functioning of algorithms is to watch over the machines: what they are actually saying to each other and how they respond. We must then evaluate whether the types and nature of those communications, as well as their content, are appropriate and expected. And we need to do this in real time. It is a tall order, but it is the critical task for the finance industry and, very soon, for every industry.
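As a rough picture of what checking "the types and nature of communications, as well as their content" could mean, here is a minimal sketch that inspects each message as it arrives. It assumes a simplified, hypothetical order lifecycle (`NEW`, `ACK`, `FILL`, `CANCEL`, `REJECT`), a single reference price, and a 5% price band; real trading protocols define far richer message sets, and a production system would also have to keep up with live traffic volumes.

```python
from dataclasses import dataclass

# Hypothetical, simplified order lifecycle: which message types may follow
# the last one seen for the same order (None = no message seen yet).
ALLOWED_NEXT = {
    None: {"NEW"},
    "NEW": {"ACK", "REJECT"},
    "ACK": {"FILL", "CANCEL"},
    "FILL": {"FILL", "CANCEL"},  # further partial fills, or cancel the rest
    # "REJECT" and "CANCEL" are terminal: nothing may follow them.
}


@dataclass
class Message:
    order_id: str
    msg_type: str
    price: float


class FlowMonitor:
    """Checks each message as it arrives against expected type and content."""

    def __init__(self, reference_price: float, max_deviation: float):
        self.reference_price = reference_price
        self.max_deviation = max_deviation  # fraction, e.g. 0.05 for a 5% band
        self.last_type = {}  # order_id -> type of the last message seen

    def check(self, msg: Message) -> list:
        """Return a list of problems; an empty list means 'looks as expected'."""
        problems = []
        last = self.last_type.get(msg.order_id)
        # Type/sequence check: is this message expected at this point?
        if msg.msg_type not in ALLOWED_NEXT.get(last, set()):
            problems.append(f"unexpected {msg.msg_type} after {last}")
        # Content check: is the price within a plausible band?
        band = self.max_deviation * self.reference_price
        if abs(msg.price - self.reference_price) > band:
            problems.append(f"price {msg.price} outside band")
        self.last_type[msg.order_id] = msg.msg_type  # record it either way
        return problems


monitor = FlowMonitor(reference_price=100.0, max_deviation=0.05)
print(monitor.check(Message("A1", "NEW", 100.5)))   # []
print(monitor.check(Message("A1", "FILL", 100.5)))  # ['unexpected FILL after NEW']
print(monitor.check(Message("B7", "NEW", 120.0)))   # ['price 120.0 outside band']
```

Unlike the kill switch, this kind of monitor reports what looks wrong and why, leaving room for a response proportionate to the problem.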

Why should we be so worried about the algorithmic wolves, and about getting this right in finance? In the evolution from electronic to digital to algorithmic business, we have reached an age in which most of our interactions, even far outside finance, are influenced by algorithms. They shape the advertisements and offers we see, the behavior of our mobile phones, the pricing of the products and services we browse and buy, and far more consequential matters such as the decisions of self-driving cars, recommended treatment and triage paths in hospitals, and the news and stories we are served.

In the very near future, our ability to accurately surveil algorithmic business, and to respond quickly (or even proactively), will be paramount in ensuring that business, and the world, keeps on running.